Archives: Building Oracle Cloud infrastructure with Terraform – Basics

I thought I’d start exploring Oracle Cloud offerings a little and try building something with Terraform.

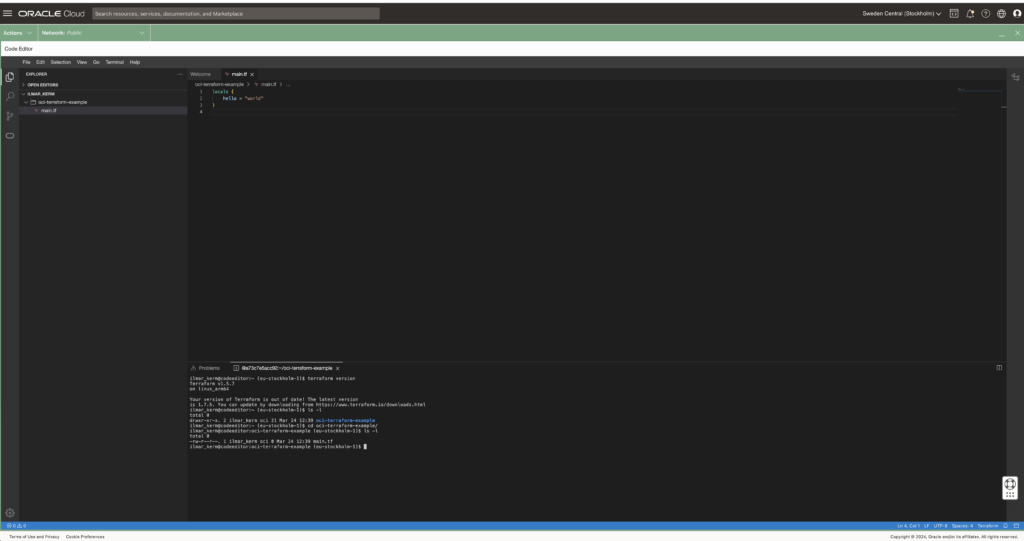

The execution environment

The OCI Cloud Console offers Cloud Shell and Code Editor right in the browser. Cloud Shell is a small Oracle Linux container with shell access that has the most popular cloud tools and OCI SDKs already deployed. Most importantly, all Oracle Cloud API commands you execute from there run silently as yourself, with no additional setup required. That includes Terraform. Pretty awesome idea, I would say: no need to set up an admin computer first.

Since I will mainly be writing code, I’m going to use only Code Editor (which is actually VS Code in your browser). It also has a built-in terminal for executing commands.

Read about executing and using Cloud Shell here.

Setting up Terraform provider

When executing from Cloud Shell / Code Editor, setting up the Terraform provider is very simple.

# versions.tf

provider "oci" {

region = "eu-stockholm-1"

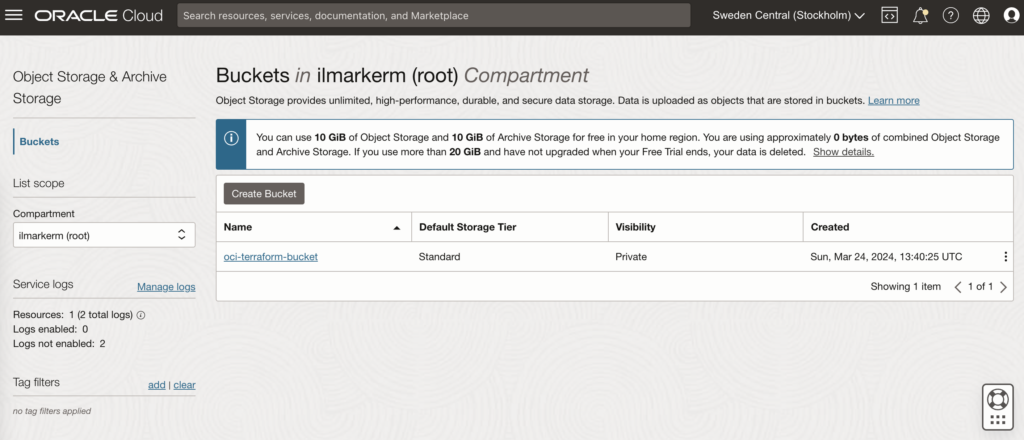

}It is very good practice to also place terraform state file in the shared object store. OCI also provides an object store and to set it up first create a Bucket in Object Storage.

This also requires setting up Customer Secret Keys for accessing the bucket over the S3 protocol. I’m going to save my access key and secret access key in a file named bucket.credentials.

# bucket.credentials

[default]

aws_access_key_id=here is your access key

aws_secret_access_key=here is your secret access key

# remote_state.tf

terraform {

backend "s3" {

bucket = "oci-terraform-bucket"

key = "oci-terraform.tfstate"

region = "eu-stockholm-1"

# ax9u97qgbo5h is the namespace of the bucket, it is shown in the Bucket Details page

endpoint = "https://ax9u97qgbo5h.compat.objectstorage.eu-stockholm-1.oraclecloud.com"

shared_credentials_file = "bucket.credentials"

skip_region_validation = true

skip_credentials_validation = true

skip_metadata_api_check = true

force_path_style = true

}

}

Creating compartment and basic networking

A compartment is just a handy hierarchical logical container that helps organise your Oracle Cloud resources better. It can also be used to set common tags for all resources created under it.

# main.tf

locals {

tenancy_id = "ocid1.tenancy.oc1..aaaaaaaawf2fv3ipfdp564ffiqpfqr6u6n3uofydgtihq3wget5357lq5i6a"

environment = "dev"

}

# Information about current tenancy, for example home region

data "oci_identity_tenancy" "tenancy" {

tenancy_id = local.tenancy_id

}

# Get the parent compartment as a terraform object

data "oci_identity_compartment" "parent_compartment" {

# To get a list of existing compartments, execute:

# oci iam compartment list

id = data.oci_identity_tenancy.tenancy.id

}

# Create compartment

resource "oci_identity_compartment" "compartment" {

# compartment_id is required and must be the OCID of the parent compartment

compartment_id = data.oci_identity_compartment.parent_compartment.id

description = "oci-terraform experiments"

name = "oci-terraform-experiments"

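# Freeform tags for the compartment. Note: as far as I know these tag the compartment itself and are not inherited by resources created under it; OCI offers tag defaults (oci_identity_tag_default, based on defined tags) for that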

freeform_tags = {

"deployed_by" = "terraform"

"environment" = local.environment

}

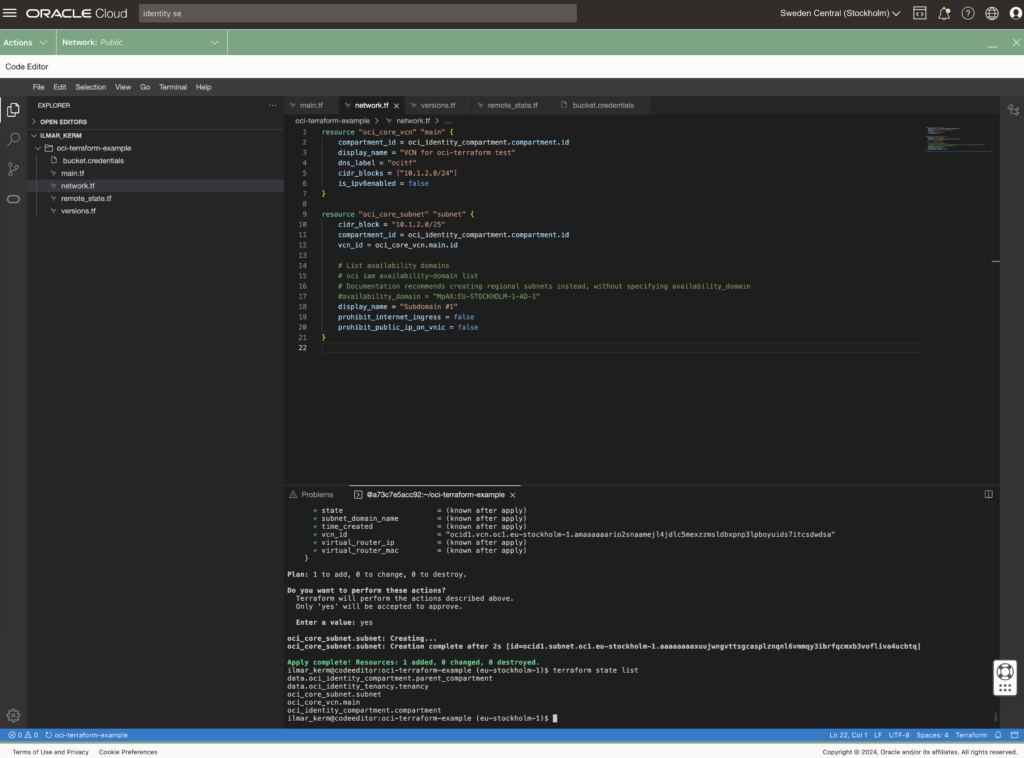

}To set up networking, first you need VCN Virtual Cloud Network and under it subnets.

# network.tf

resource "oci_core_vcn" "main" {

compartment_id = oci_identity_compartment.compartment.id

display_name = "VCN for oci-terraform test"

dns_label = "ocitf"

cidr_blocks = ["10.1.2.0/24"]

is_ipv6enabled = false

}

resource "oci_core_subnet" "subnet" {

cidr_block = "10.1.2.0/25"

compartment_id = oci_identity_compartment.compartment.id

vcn_id = oci_core_vcn.main.id

# List availability domains

# oci iam availability-domain list

# Documentation recommends creating regional subnets instead, without specifying availability_domain

#availability_domain = "MpAX:EU-STOCKHOLM-1-AD-1"

display_name = "Subdomain #1"

prohibit_internet_ingress = false

prohibit_public_ip_on_vnic = false

}
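The subnet range above is hard-coded as the lower half of the VCN range. As a minimal sketch, assuming you would rather derive it than type it, Terraform's built-in cidrsubnet function can do the arithmetic. The local names vcn_cidr, subnet_1_cidr and subnet_2_cidr are my own placeholders, not part of the setup above.

```hcl
locals {
  # Same range as cidr_blocks in oci_core_vcn.main
  vcn_cidr = "10.1.2.0/24"

  # cidrsubnet(prefix, newbits, netnum): adding 1 bit splits the /24 into two /25 networks
  subnet_1_cidr = cidrsubnet(local.vcn_cidr, 1, 0) # 10.1.2.0/25, the range used by oci_core_subnet.subnet
  subnet_2_cidr = cidrsubnet(local.vcn_cidr, 1, 1) # 10.1.2.128/25, still free for a second subnet
}
```

With this in place, the VCN could use cidr_blocks = [local.vcn_cidr] and the subnet cidr_block = local.subnet_1_cidr, so the two can never drift apart.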

To be continued

I don’t really know where this post series is going. I’ve done quite a bit of Terraforming in AWS, so here I’m just exploring what Oracle Cloud has to offer, and instead of using the dreaded ClickOps, I’ll try to do it properly with Terraform.

At the end of the post I have these resources created.
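One way to list them from Terraform itself is with output values. This is only a sketch, assuming the resource names used in main.tf and network.tf above. The file name outputs.tf and the output names are hypothetical.

```hcl
# outputs.tf (hypothetical file name)

output "compartment_id" {
  description = "OCID of the compartment created in main.tf"
  value       = oci_identity_compartment.compartment.id
}

output "vcn_id" {
  description = "OCID of the VCN created in network.tf"
  value       = oci_core_vcn.main.id
}

output "subnet_id" {
  description = "OCID of the subnet created in network.tf"
  value       = oci_core_subnet.subnet.id
}
```

After terraform apply, running terraform output in the Code Editor terminal should print the three OCIDs.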

Archives: Efficient test database refresh using Data Guard Snapshot Standby, Multitenant and storage snapshots

There are many different ways to refresh a test database with production data. Here I’m going to describe another one that might be on the more complex side, but it follows from the basic requirements I had:

- Data must be cleaned before opening it up for testing. The cleaning process is extensive and usually takes a few days to run.

- Switching testing from the old copy to the new copy must be relatively fast. Also, if problems are discovered, we should be able to switch back to the old copy relatively fast.

- Databases are large, hundreds of terabytes, so any kind of data copy over the network (like PDB cloning over a database link) would take an unacceptable amount of time and resources.

- There is no network connection between the test database network and the production database network, so PDB cloning over a database link is not possible anyway.

- The system should also work with databases that have a Data Guard standby with Fast-Start Failover configured.

Copying the data in any form will not work, since the databases are really large, so some kind of storage-level snapshot is needed. We can use a modern NAS system (which the databases access over NFS) that can do snapshots and thin clones, although not in multiple layers.

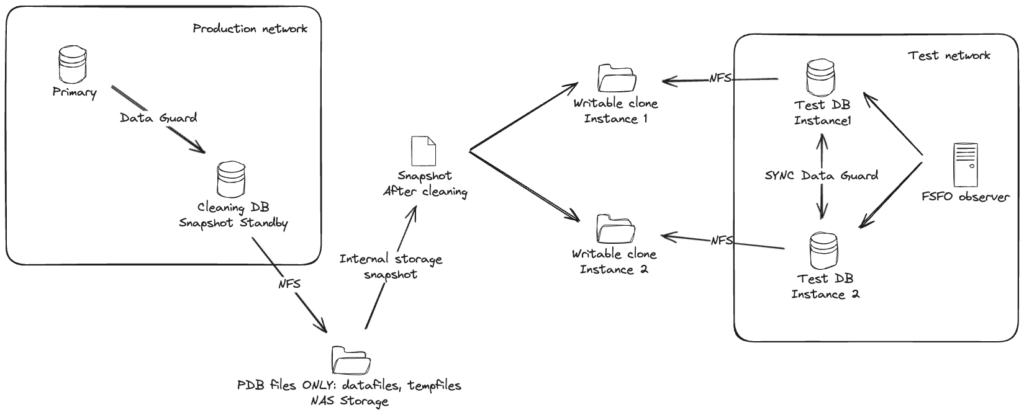

Here is one attempt to draw out the system, using the more complicated case where the test database actually has two database instances in Data Guard Fast-Start Failover mode.

Basically:

- There is a special Data Guard standby for the cleaning process. This standby uses external NAS storage for all PDB datafiles and tempfiles. No CDB files, such as CDB datafiles, online logs, standby logs or the recovery area, should be stored in that NAS folder; keep CDB files separate.

- To start the cleaning process, put the cleaning standby in Snapshot Standby mode (instructions are here) and run all cleaning processes and scripts on the snapshot standby. After cleaning has finished successfully, create PDB metadata XML files and shut down the snapshot standby.

- Create a storage-level snapshot of the NAS folder storing the PDB files.

- Convert the snapshot standby back to a normal physical standby.

- Create a writable clone from the same storage snapshot for the test database. If the test database consists of several Data Guard databases, create a clone (from the same snapshot) for each one of them and mount them on the test database hosts.

- On the test database, create a new pluggable database as a clone (using NOCOPY to avoid any data movement). In the case of Data Guard, do not forget to set standby_pdb_source_file_directory so that the standby database knows where to find the data files of the plugged-in database.

Done. At this point both the old and the new copy are attached to the same test CDB as independent PDBs. When you are happy with the new copy, just direct testing traffic to the new PDB. The old one is then no longer needed, so drop it and clean up the filesystems.

It may sound too complex, but the whole point is to avoid moving around large amounts of data. The key to supporting rapid switching to the new copy, and to supporting Data Guard setups (on the test environment side), is keeping the test database CDB intact.

The steps

I have a post about using Snapshot Standby, you can read it here. All the code below is for the experts…

The preparation

A big part of the system is preparing the cleaning database that will be used as the Snapshot Standby.

Here I use the following paths:

- /oradata – Location for standby database CDB files, online logs, standby logs, recovery area

- /nfs/pdb – NFS folder from external NAS, that will ONLY store all PDB datafiles and tempfiles

# Parameters

NAME TYPE VALUE

------------------------------------ ----------- ------------------------------

db_create_file_dest string /nfs/pdb

db_create_online_log_dest_1 string /oradata

db_recovery_file_dest string /oradata

db_recovery_file_dest_size big integer 10T

# Datafiles

CON_ID NAME

---------- --------------------------------------------------------------------------------------------------------------------------

1 /oradata/PROD1MT101CL/datafile/o1_mf_audit_tb__0d68332o_.dbf

1 /oradata/PROD1MT101CL/datafile/o1_mf_sysaux__0d5fjj5y_.dbf

1 /oradata/PROD1MT101CL/datafile/o1_mf_system__0d4zdst4_.dbf

1 /oradata/PROD1MT101CL/datafile/o1_mf_undotbs1__0d5yw58n_.dbf

1 /oradata/PROD1MT101CL/datafile/o1_mf_users__0d65hljg_.dbf

2 /oradata/PROD1MT101CL/C24DD111D3215498E0536105500AAC63/datafile/data_D-WI93OP21_I-1717445842_TS-SYSAUX_FNO-4_q92k3val

2 /oradata/PROD1MT101CL/C24DD111D3215498E0536105500AAC63/datafile/data_D-WI93OP21_I-1717445842_TS-SYSTEM_FNO-2_p32k3v89

2 /oradata/PROD1MT101CL/C24DD111D3215498E0536105500AAC63/datafile/data_D-WI93OP21_I-1717445842_TS-UNDOTBS1_FNO-6_q22k3vag

3 /nfs/pdb/PROD1MT101CL/CC1BE00B92E672FFE053F905500A1BAE/datafile/o1_mf_audit_tb__0fbbwglb_.dbf

4 /nfs/pdb/PROD1MT101CL/C2811CAFC29D1DC9E053F905500A7EC9/datafile/o1_mf_audit_tb__0fk2wkxy_.dbf

4 /nfs/pdb/PROD1MT101CL/C2811CAFC29D1DC9E053F905500A7EC9/datafile/o1_mf_users__0fj4hm5h_.dbf

5 /nfs/pdb/PROD1MT101CL/CF6A94E61EF92940E053F905500AFB8E/datafile/o1_mf_audit_tb__0fsslsvv_.dbf

5 /nfs/pdb/PROD1MT101CL/CF6A94E61EF92940E053F905500AFB8E/datafile/o1_mf_users__0frm1yg5_.dbf

6 /nfs/pdb/PROD1MT101CL/CF6A94E61F3E2940E053F905500AFB8E/datafile/o1_mf_audit_tb__0fz8nq60_.dbf

6 /nfs/pdb/PROD1MT101CL/CF6A94E61F3E2940E053F905500AFB8E/datafile/o1_mf_users__0fy4j81x_.dbf

7 /nfs/pdb/PROD1MT101CL/CF6A94E61F832940E053F905500AFB8E/datafile/o1_mf_audit_tb__0f46dfvs_.dbf

7 /nfs/pdb/PROD1MT101CL/CF6A94E61F832940E053F905500AFB8E/datafile/o1_mf_users__0f3cn007_.dbf

8 /nfs/pdb/PROD1MT101CL/CF6A94E61FC82940E053F905500AFB8E/datafile/o1_mf_audit_tb__0g5h842b_.dbf

8 /nfs/pdb/PROD1MT101CL/CF6A94E61FC82940E053F905500AFB8E/datafile/o1_mf_users__0g2v64l9_.dbf

9 /nfs/pdb/PROD1MT101CL/05126406D61853B3E0635005500AA12B/datafile/o1_mf_audit_tb__0g9rsqpo_.dbf

9 /nfs/pdb/PROD1MT101CL/05126406D61853B3E0635005500AA12B/datafile/o1_mf_inmemory__0g8yrdvh_.dbf

# Tempfiles

CON_ID NAME

---------- -------------------------------------------------------------------------------------------------

1 /oradata/PROD1MT101CL/datafile/o1_mf_temp__0d35c01p_.tmp

2 /oradata/PROD1MT101CL/C24DD111D3215498E0536105500AAC63/datafile/o1_mf_temp__0d36q7dx_.tmp

3 /nfs/pdb/PROD1MT101CL/CC1BE00B92E672FFE053F905500A1BAE/datafile/o1_mf_temp__0gr02w4t_.tmp

3 /nfs/pdb/PROD1MT101CL/CC1BE00B92E672FFE053F905500A1BAE/datafile/o1_mf_temp__0gr0j0wr_.tmp

3 /nfs/pdb/PROD1MT101CL/CC1BE00B92E672FFE053F905500A1BAE/datafile/o1_mf_temp_dba__0gqzydts_.tmp

4 /nfs/pdb/PROD1MT101CL/C2811CAFC29D1DC9E053F905500A7EC9/datafile/o1_mf_temp__0grhwybg_.tmp

4 /nfs/pdb/PROD1MT101CL/C2811CAFC29D1DC9E053F905500A7EC9/datafile/o1_mf_temp__0grjcvk4_.tmp

4 /nfs/pdb/PROD1MT101CL/C2811CAFC29D1DC9E053F905500A7EC9/datafile/o1_mf_temp_dba__0h6slfch_.tmp

5 /nfs/pdb/PROD1MT101CL/CF6A94E61EF92940E053F905500AFB8E/datafile/o1_mf_temp__0gokwd9n_.tmp

5 /nfs/pdb/PROD1MT101CL/CF6A94E61EF92940E053F905500AFB8E/datafile/o1_mf_temp__0gol9jc6_.tmp

5 /nfs/pdb/PROD1MT101CL/CF6A94E61EF92940E053F905500AFB8E/datafile/o1_mf_temp_dba__0gokr9ng_.tmp

6 /nfs/pdb/PROD1MT101CL/CF6A94E61F3E2940E053F905500AFB8E/datafile/o1_mf_temp__0gnrh7x8_.tmp

6 /nfs/pdb/PROD1MT101CL/CF6A94E61F3E2940E053F905500AFB8E/datafile/o1_mf_temp__0gnrw0dj_.tmp

6 /nfs/pdb/PROD1MT101CL/CF6A94E61F3E2940E053F905500AFB8E/datafile/o1_mf_temp_dba__0gnrb2gg_.tmp

7 /nfs/pdb/PROD1MT101CL/CF6A94E61F832940E053F905500AFB8E/datafile/o1_mf_temp__0gmw72co_.tmp

7 /nfs/pdb/PROD1MT101CL/CF6A94E61F832940E053F905500AFB8E/datafile/o1_mf_temp__0gmwnqsp_.tmp

7 /nfs/pdb/PROD1MT101CL/CF6A94E61F832940E053F905500AFB8E/datafile/o1_mf_temp_dba__0gmw1xm3_.tmp

8 /nfs/pdb/PROD1MT101CL/CF6A94E61FC82940E053F905500AFB8E/datafile/o1_mf_temp__0gm118wt_.tmp

8 /nfs/pdb/PROD1MT101CL/CF6A94E61FC82940E053F905500AFB8E/datafile/o1_mf_temp__0gm1dv5n_.tmp

8 /nfs/pdb/PROD1MT101CL/CF6A94E61FC82940E053F905500AFB8E/datafile/o1_mf_temp_dba__0gm0wzcc_.tmp

9 /nfs/pdb/PROD1MT101CL/05126406D61853B3E0635005500AA12B/datafile/o1_mf_temp__0gj66tgb_.tmp

9 /nfs/pdb/PROD1MT101CL/05126406D61853B3E0635005500AA12B/datafile/o1_mf_temp_dba__0gh7wbpf_.tmp

# Logs

MEMBER

--------------------------------------------------------

/oradata/PROD1MT101CL/onlinelog/o1_mf_1__0b0frl6b_.log

/oradata/PROD1MT101CL/onlinelog/o1_mf_2__0b1qco5j_.log

/oradata/PROD1MT101CL/onlinelog/o1_mf_3__0b2bd0od_.log

/oradata/PROD1MT101CL/onlinelog/o1_mf_4__0b2z3qvo_.log

/oradata/PROD1MT101CL/onlinelog/o1_mf_5__0b3tkddo_.log

/oradata/PROD1MT101CL/onlinelog/o1_mf_6__0b4gvg2h_.log

/oradata/PROD1MT101CL/onlinelog/o1_mf_7__0b51zbqp_.log

Cleaning

# Convert the special Data Guard standby database to a snapshot standby

DGMGRL> convert database prod1mt101cl to snapshot standby;

# Now run all your cleaning scripts on this special database

SQL> @all_of_my_special_cleaning_scripts

# Cleaning is now finished successfully

# Close and reopen each PDB in READ ONLY mode

SQL> alter pluggable database all close;

SQL> alter pluggable database all open read only;

# Create XML metadata file for each PDB

SQL> exec DBMS_PDB.DESCRIBE('/nfs/pdb/apppdb1.xml','APPPDB1');

SQL> exec DBMS_PDB.DESCRIBE('/nfs/pdb/apppdb2.xml','APPPDB2');

SQL> exec DBMS_PDB.DESCRIBE('/nfs/pdb/apppdb3.xml','APPPDB3');

# Shut down the cleaning database

SQL> shutdown immediate

Snapshot

Now use your NAS toolkit (REST API for example) to create a storage-level snapshot of the /nfs/pdb filesystem.

Convert Snapshot Standby back to physical standby

Use the instructions in this post to restore the cleaning instance back to being a physical standby.

Attach PDBs to test database CDB

First, use your NAS toolkit to create a thin writable clone from the snapshot created earlier (or multiple clones if the test setup has a Data Guard standby, a dedicated copy for each) and mount them on the test database hosts as /nfs/newclone.

# If the test setup has Data Guard, set standby_pdb_source_file_directory on the standby so it knows where to find the data files

alter system set standby_pdb_source_file_directory='/nfs/newclone/PROD1MT101CL/CC1BE00B92E672FFE053F905500A1BAE/datafile/' scope=memory;

# On test primary create new pluggable database as clone

create pluggable database apppdb1_newclone as clone using '/nfs/newclone/apppdb1.xml' source_file_directory='/nfs/newclone/PROD1MT101CL/CC1BE00B92E672FFE053F905500A1BAE/datafile/' nocopy tempfile reuse;

# Open it

alter pluggable database apppdb1_newclone open;

Done.
