Tag: terraform

- Written by: ilmarkerm

- Category: Blog entry

- Published: October 2, 2024

In the previous post I looked at how to create an Oracle database home in OCI using custom patches. Here I create an Oracle Base Database on top of that custom home.

I struggled with this for days, mostly because I first wanted to create the database on LVM storage. After running for many hours, the provisioning always got stuck at 42% without any error message. Finally, after a lot of experimentation, I found that if I replace LVM with ASM storage, it all just works.

# List all availability domains

data "oci_identity_availability_domains" "ads" {

compartment_id = oci_identity_compartment.compartment.id

}

# Create the Oracle Base database

resource "oci_database_db_system" "test23ai" {

# Waits until DB system is provisioned

# It can take a few hours

availability_domain = data.oci_identity_availability_domains.ads.availability_domains[0].name

compartment_id = oci_identity_compartment.compartment.id

db_home {

database {

admin_password = "correct#HorseBatt5eryS1-_ple"

character_set = "AL32UTF8"

#database_software_image_id = oci_database_database_software_image.db_23051.id

db_name = "test23ai"

pdb_name = "PDB1"

db_backup_config {

auto_backup_enabled = false

}

}

db_version = oci_database_database_software_image.db_23051.patch_set

database_software_image_id = oci_database_database_software_image.db_23051.id

}

hostname = "test23ai1"

shape = "VM.Standard.E5.Flex"

ssh_public_keys = ["ssh-rsa AAAAB3NzaC1yc2EAAA paste your own ssh public key here"]

subnet_id = oci_core_subnet.subnet.id

cpu_core_count = 2

data_storage_size_in_gb = 256

database_edition = "ENTERPRISE_EDITION"

db_system_options {

storage_management = "ASM" # LVM did not work for me, provisioning stuck at 42% for many many hours until it times out

}

display_name = "Test 23ai"

domain = "dev.se1"

#fault_domains = var.db_system_fault_domains

license_model = "LICENSE_INCLUDED"

node_count = 1

# Network Security Groups

#nsg_ids = var.db_system_nsg_ids

source = "NONE"

storage_volume_performance_mode = "BALANCED"

time_zone = "UTC"

}
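The admin password in the listing above is a literal string. A minimal sketch of one alternative, assuming a hypothetical variable named `db_admin_password` (it is not part of the original configuration), is to declare it as a sensitive input variable and reference it from the `database` block:

```hcl
# Hypothetical variable name, introduced only for illustration.
variable "db_admin_password" {
  description = "Admin password for the new database"
  type        = string
  sensitive   = true # hides the value in plan/apply output
}

# In the database block of oci_database_db_system.test23ai,
# the literal could then be replaced with:
#   admin_password = var.db_admin_password
```

The value could then be supplied through the `TF_VAR_db_admin_password` environment variable instead of being committed with the code. Note that Terraform still stores the value in the state file, so the state needs to be protected either way.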

The connection options are visible in the Terraform state. Below is the database connection information, with connection strings in different formats, so your Terraform code could take these values and store them in a service discovery or parameter store.
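As a sketch of how such a value could be picked up in code, an output like the one below could expose the CDB connect string. The output name is made up for illustration, and the index path is an assumption read off the state listing that follows (`db_home` and `database` as single nested blocks, `connection_strings` as a one-element list).

```hcl
# Hypothetical output name; the attribute path is inferred from the state listing.
output "test23ai_cdb_connect_string" {
  description = "EZConnect string of the container database"
  value       = oci_database_db_system.test23ai.db_home[0].database[0].connection_strings[0].cdb_default
}
```

The same path ending in `cdb_ip_default` should give the IP-based connect descriptor instead. The full state listing looks like this: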

ilmar_kerm@codeeditor:oci-terraform-example (eu-stockholm-1)$ terraform state show oci_database_db_system.test23ai

# oci_database_db_system.test23ai:

resource "oci_database_db_system" "test23ai" {

availability_domain = "AfjF:EU-STOCKHOLM-1-AD-1"

compartment_id = "ocid1.compartment.oc1..aaaa"

cpu_core_count = 2

data_storage_percentage = 80

data_storage_size_in_gb = 256

database_edition = "ENTERPRISE_EDITION"

defined_tags = {

"Oracle-Tags.CreatedBy" = "default/ilmar.kerm@gmail.com"

"Oracle-Tags.CreatedOn" = "2024-10-02T14:57:03.606Z"

}

disk_redundancy = "HIGH"

display_name = "Test 23ai"

domain = "dev.se1"

fault_domains = [

"FAULT-DOMAIN-2",

]

freeform_tags = {}

hostname = "test23ai1"

id = "ocid1.dbsystem.oc1.eu-stockholm-1.anqxeljr4ebxpbqanr42p2zebku5hdk5nci2"

iorm_config_cache = []

license_model = "LICENSE_INCLUDED"

listener_port = 1521

maintenance_window = []

memory_size_in_gbs = 32

node_count = 1

reco_storage_size_in_gb = 256

scan_ip_ids = []

shape = "VM.Standard.E5.Flex"

source = "NONE"

ssh_public_keys = [

"ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABgQCvccb2GOc+VU6V0lw367a5sgKqn0epAok9vCVboK6WvQid6byo7hkWUSixIuB6ZPGG89n3ig4r my ssh public key",

]

state = "AVAILABLE"

storage_volume_performance_mode = "BALANCED"

subnet_id = "ocid1.subnet.oc1.eu-stockholm-1.aaaaaaaal6ru"

time_created = "2024-10-02 14:57:04.037 +0000 UTC"

time_zone = "UTC"

version = "23.5.0.24.07"

vip_ids = []

data_collection_options {

is_diagnostics_events_enabled = false

is_health_monitoring_enabled = false

is_incident_logs_enabled = false

}

db_home {

create_async = false

database_software_image_id = "ocid1.databasesoftwareimage.oc1.eu-stockholm-1.anqxeljr4ebxpbqa3h6dawz"

db_home_location = "/u01/app/oracle/product/23.0.0.0/dbhome_1"

db_version = "23.5.0.24.07"

defined_tags = {}

display_name = "dbhome20241002145704"

freeform_tags = {}

id = "ocid1.dbhome.oc1.eu-stockholm-1.anqxeljrb3hetziaby6hwudav"

state = "AVAILABLE"

time_created = "2024-10-02 14:57:04.042 +0000 UTC"

database {

admin_password = (sensitive value)

character_set = "AL32UTF8"

connection_strings = [

{

all_connection_strings = {

"cdbDefault" = "test23ai1.dev.se1:1521/test23ai_rf6_arn.dev.se1"

"cdbIpDefault" = "(DESCRIPTION=(CONNECT_TIMEOUT=5)(TRANSPORT_CONNECT_TIMEOUT=3)(RETRY_COUNT=3)(ADDRESS_LIST=(LOAD_BALANCE=on)(ADDRESS=(PROTOCOL=TCP)(HOST=10.1.2.80)(PORT=1521)))(CONNECT_DATA=(SERVICE_NAME=test23ai_rf6_arn.dev.se1)))"

}

cdb_default = "test23ai1.dev.se1:1521/test23ai_rf6_arn.dev.se1"

cdb_ip_default = "(DESCRIPTION=(CONNECT_TIMEOUT=5)(TRANSPORT_CONNECT_TIMEOUT=3)(RETRY_COUNT=3)(ADDRESS_LIST=(LOAD_BALANCE=on)(ADDRESS=(PROTOCOL=TCP)(HOST=10.1.2.80)(PORT=1521)))(CONNECT_DATA=(SERVICE_NAME=test23ai_rf6_arn.dev.se1)))"

},

]

database_software_image_id = "ocid1.databasesoftwareimage.oc1.eu-stockholm-1.anqxeljr4ebxpbqa3h6"

db_name = "test23ai"

db_unique_name = "test23ai_rf6_arn"

db_workload = "OLTP"

defined_tags = {

"Oracle-Tags.CreatedBy" = "default/ilmar.kerm@gmail.com"

"Oracle-Tags.CreatedOn" = "2024-10-02T14:57:03.740Z"

}

freeform_tags = {}

id = "ocid1.database.oc1.eu-stockholm-1.anqxeljr4ebxpbqac3v4lrr"

ncharacter_set = "AL16UTF16"

pdb_name = "PDB1"

pluggable_databases = []

state = "AVAILABLE"

time_created = "2024-10-02 14:57:04.043 +0000 UTC"

db_backup_config {

auto_backup_enabled = false

auto_full_backup_day = "SUNDAY"

recovery_window_in_days = 0

run_immediate_full_backup = false

}

}

}

db_system_options {

storage_management = "ASM"

}

}

- Written by: ilmarkerm

- Category: Blog entry

- Published: September 29, 2024

I’ll continue exploring OCI services with Terraform. Now it is time to start looking into databases. High-ranking Oracle PMs have been lobbying for a database image creation service, where you just supply the patch numbers and Oracle returns a fully built database home. I see that this service is now available in the cloud (for cloud databases only).

I’ll try it out, using terraform.

resource "oci_database_database_software_image" "db_23051" {

# NB! Waits until image is provisioned

# This took 10m47s to provision

compartment_id = oci_identity_compartment.compartment.id

display_name = "23-db-23051"

image_shape_family = "VM_BM_SHAPE" # For use in Base Database service

# oci db version list

# NB! 23.0.0.0 seems to be behind on patches, 23.0.0.0.0 seems to be current

database_version = "23.0.0.0.0"

image_type = "DATABASE_IMAGE"

# Can't find how to query that list - but the format seems quite self-explanatory

# Exadata Cloud Service Software Versions (Doc ID 2333222.1)

patch_set = "23.5.0.24.07"

}

I had a hard time finding the allowed values for the parameter patch_set, but they seem to be described in Doc ID 2333222.1 (along with what each patch set contains).

Examining the state of the created resource

ilmar_kerm@codeeditor:oci-terraform-example (eu-stockholm-1)$ terraform state show oci_database_database_software_image.db_23051

# oci_database_database_software_image.db_23051:

resource "oci_database_database_software_image" "db_23051" {

compartment_id = "ocid1.compartment.oc1..aaaaaaaasbzzr7i54kpv6oc5s7i23isiij6n2tyentd5udc34ptzagovrgqa"

database_software_image_included_patches = [

"35221462",

"36741532",

"36744688",

]

database_software_image_one_off_patches = [

"35221462",

"36741532",

"36744688",

]

database_version = "23.0.0.0.0"

defined_tags = {

"Oracle-Tags.CreatedBy" = "default/ilmar.kerm@gmail.com"

"Oracle-Tags.CreatedOn" = "2024-09-29T12:36:02.119Z"

}

display_name = "23-db-23051"

freeform_tags = {}

id = "ocid1.databasesoftwareimage.oc1.eu-stockholm-1.anqxeljr4ebxpbqadhgioquzxv6qtrui72e3sn3c7iwxcljncmdq7fx5jdbq"

image_shape_family = "VM_BM_SHAPE"

image_type = "DATABASE_IMAGE"

is_upgrade_supported = false

patch_set = "23.5.0.24.07"

state = "AVAILABLE"

time_created = "2024-09-29 12:36:02.123 +0000 UTC"

}

One thing I notice here (verified with testing) is that the parameter database_software_image_one_off_patches gets pre-populated with the included patches after the image is created, so you also have to list the included patches in the parameter value.
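A minimal sketch of what that could look like, reusing the patch numbers from the state output above. The resource name and display name are made up for illustration, and the commented-out extra patch number is a placeholder, not a real patch:

```hcl
# Hypothetical resource, shown only to illustrate the one-off patch list.
resource "oci_database_database_software_image" "db_23051_oneoff" {
  compartment_id     = oci_identity_compartment.compartment.id
  display_name       = "23-db-23051-oneoff"
  image_shape_family = "VM_BM_SHAPE"
  database_version   = "23.0.0.0.0"
  image_type         = "DATABASE_IMAGE"
  patch_set          = "23.5.0.24.07"

  database_software_image_one_off_patches = [
    # Patches already included in the image, as reported in the state
    "35221462",
    "36741532",
    "36744688",
    # Any additional one-off patches would go here (placeholder number)
    # "12345678",
  ]
}
```

Listing the included patches explicitly should keep the configuration in line with what the provider reports after creation, so a later plan does not show a difference on this attribute.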

With the 19c version the process is similar.

resource "oci_database_database_software_image" "db_19241" {

# NB! Waits until image is provisioned

# This took 16m4s to provision

compartment_id = oci_identity_compartment.compartment.id

display_name = "19-db-19241"

image_shape_family = "VM_BM_SHAPE" # For use in Base Database service

# oci db version list

database_version = "19.0.0.0"

image_type = "DATABASE_IMAGE"

patch_set = "19.24.0.0"

}

I did try to apply an MRP on top of it, but maybe the cloud patch numbers are different, since the usual MRP patch number did not apply.

In the next post I’ll try to spin up an actual database using the image.

- Written by: ilmarkerm

- Category: Blog entry

- Published: April 14, 2024

Here I’m exploring how to control basic network-level access to resources. In AWS there is a concept called Security Groups. In OCI the similar concept is called Network Security Groups (NSGs), and there is also a slightly less powerful concept called Security Lists. A good improvement of Network Security Groups over Security Lists is that rules can refer to other NSGs, not only CIDR blocks.

Below I create two NSGs, one for databases and one for application servers, and allow unrestricted outgoing traffic from both.

# security.tf

# Rules for appservers

resource "oci_core_network_security_group" "appserver" {

compartment_id = oci_identity_compartment.compartment.id

vcn_id = oci_core_vcn.main.id

display_name = "Application servers"

}

resource "oci_core_network_security_group_security_rule" "appserver_egress" {

network_security_group_id = oci_core_network_security_group.appserver.id

direction = "EGRESS"

protocol = "all"

description = "Allow all Egress traffic"

destination = "0.0.0.0/0"

destination_type = "CIDR_BLOCK"

}

# Rules for databases

resource "oci_core_network_security_group" "db" {

compartment_id = oci_identity_compartment.compartment.id

vcn_id = oci_core_vcn.main.id

display_name = "Databases"

}

resource "oci_core_network_security_group_security_rule" "db_egress" {

network_security_group_id = oci_core_network_security_group.db.id

direction = "EGRESS"

protocol = "all"

description = "Allow all Egress traffic"

destination = "0.0.0.0/0"

destination_type = "CIDR_BLOCK"

}

Some rule examples to allow traffic from appservers towards databases. Here the source refers to the appserver NSG, not a CIDR.

# This rule allows port 1521/tcp to be accessed from NSG "appserver" created earlier

resource "oci_core_network_security_group_security_rule" "db_appserver_oracle" {

network_security_group_id = oci_core_network_security_group.db.id

direction = "INGRESS"

protocol = "6" # TCP

description = "Allow ingress from application servers to 1521/tcp"

source_type = "NETWORK_SECURITY_GROUP"

source = oci_core_network_security_group.appserver.id

tcp_options {

destination_port_range {

min = 1521

max = 1521

}

}

}

# This rule allows port 5432/tcp to be accessed from NSG "appserver" created earlier

resource "oci_core_network_security_group_security_rule" "db_appserver_postgres" {

network_security_group_id = oci_core_network_security_group.db.id

direction = "INGRESS"

protocol = "6" # TCP

description = "Allow ingress from application servers to 5432/tcp"

source_type = "NETWORK_SECURITY_GROUP"

source = oci_core_network_security_group.appserver.id

tcp_options {

destination_port_range {

min = 5432

max = 5432

}

}

}

And one example rule for the appserver group. Here I just want to show that the source NSG can refer to itself, so the port is open only to resources placed in the same NSG.

# This rule allows port 80/tcp to be accessed from the NSG itself

# Example use - the application is running unencrypted HTTP and is expected to have a loadbalancer in front, that does the encryption. In this case loadbalancer could be put to the same NSG.

# Or if the different application servers need to have a backbone communication port between each other - like cluster interconnect

resource "oci_core_network_security_group_security_rule" "appserver_http" {

network_security_group_id = oci_core_network_security_group.appserver.id

direction = "INGRESS"

protocol = "6" # TCP

description = "Allow access to port 80/tcp only from current NSG (self)"

source_type = "NETWORK_SECURITY_GROUP"

source = oci_core_network_security_group.appserver.id

tcp_options {

destination_port_range {

min = 80

max = 80

}

}

}

Now, network security groups need to be attached to the resources they are intended to protect. NSGs are attached to virtual network adapters (VNICs).

To attach an NSG to my previously created compute instance, I have to go back and edit the compute instance declaration to attach the NSG to the primary VNIC of that instance.

# compute.tf

resource "oci_core_instance" "arm_instance" {

compartment_id = oci_identity_compartment.compartment.id

# oci iam availability-domain list

availability_domain = "MpAX:EU-STOCKHOLM-1-AD-1"

# oci compute shape list --compartment-id

shape = "VM.Standard.A1.Flex" # ARM based shape

shape_config {

# How many CPUs and memory

ocpus = 2

memory_in_gbs = 4

}

display_name = "test-arm-1"

source_details {

# The source operating system image

# oci compute image list --all --output table --compartment-id

source_id = data.oci_core_images.oel.images[0].id

source_type = "image"

}

create_vnic_details {

# Network details

subnet_id = oci_core_subnet.subnet.id

assign_public_ip = true

# attaching Network Security Groups - NSGs

nsg_ids = [oci_core_network_security_group.appserver.id]

}

# CloudInit metadata - including my public SSH key

metadata = {

ssh_authorized_keys = "ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABgQCZ4bqPK+Mwiy+HLabqJxCMcQ/hY7IPx/oEQZWZq7krJxkLLUI6lkw44XRCutgww1q91yTdsSUNDZ9jFz9LihGTEIu7CGKkzmoGtAWHwq2W38GuA5Fqr0r2vPH1qwkTiuN+VmeKJ+qzOfm9Lh1zjD5e4XndjxiaOrw0wI19zpWlUnEqTTjgs7jz9X7JrHRaimzS3PEF5GGrT6oy6gWoKiWSjrQA2VGWI0yNQpUBFTYWsKSHtR+oJHf2rM3LLyzKcEXnlUUJrjDqNsbbcCN26vIdCGIQTvSjyLj6SY+wYWJEHCgPSbBRUcCEcwp+bATDQNm9L4tI7ZON5ZiJstL/sqIBBXmqruh7nSkWAYQK/H6PUTMQrUU5iK8fSWgS+CB8CiaA8zos9mdMfs1+9UKz0vMDV7PFsb7euunS+DiS5iyz6dAz/uFexDbQXPCbx9Vs7TbBW2iPtYc6SNMqFJD3E7sb1SIHhcpUvdLdctLKfnl6cvTz2o2VfHQLod+mtOq845s= ilmars_public_key"

}

}

- Written by: ilmarkerm

- Category: Blog entry

- Published: April 7, 2024

Continuing to build Oracle Cloud Infrastructure with Terraform. Today I move on to compute instances.

But first some networking: the VCN I created earlier did not have access to the internet. Let’s fix that now. The code below adds an Internet Gateway and modifies the default route table to send outgoing network traffic via the Internet Gateway.

# network.tf

resource "oci_core_internet_gateway" "internet_gateway" {

compartment_id = oci_identity_compartment.compartment.id

vcn_id = oci_core_vcn.main.id

# Internet Gateway cannot be associated with Route Table here, otherwise adding a route table rule will error with - Rules in the route table must use private IP as a target.

#route_table_id = oci_core_vcn.main.default_route_table_id

}

resource "oci_core_default_route_table" "default_route_table" {

manage_default_resource_id = oci_core_vcn.main.default_route_table_id

compartment_id = oci_identity_compartment.compartment.id

display_name = "Default Route Table for VCN"

route_rules {

network_entity_id = oci_core_internet_gateway.internet_gateway.id

destination = "0.0.0.0/0"

destination_type = "CIDR_BLOCK"

}

}

Moving on to the compute instance itself. The first question is what operating system it should run, that is, what the source image is. There is a data source for this. Here I select the latest Oracle Linux 9 image for ARM.

data "oci_core_images" "oel" {

compartment_id = oci_identity_compartment.compartment.id

operating_system = "Oracle Linux"

operating_system_version = "9"

shape = "VM.Standard.A1.Flex"

state = "AVAILABLE"

sort_by = "TIMECREATED"

sort_order = "DESC"

}

# Output the list for debugging

output "images" {

value = data.oci_core_images.oel

}

We are now ready to create the compute instance itself. In the metadata I provide my SSH public key, so that I can SSH into the server.

resource "oci_core_instance" "arm_instance" {

compartment_id = oci_identity_compartment.compartment.id

# oci iam availability-domain list

availability_domain = "MpAX:EU-STOCKHOLM-1-AD-1"

# oci compute shape list --compartment-id

shape = "VM.Standard.A1.Flex" # ARM based shape

shape_config {

# How many CPUs and memory

ocpus = 2

memory_in_gbs = 4

}

display_name = "test-arm-1"

source_details {

# The source operating system image

# oci compute image list --all --output table --compartment-id

source_id = data.oci_core_images.oel.images[0].id

source_type = "image"

}

create_vnic_details {

# Network details

subnet_id = oci_core_subnet.subnet.id

assign_public_ip = true

}

# CloudInit metadata - including my public SSH key

metadata = {

ssh_authorized_keys = "ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABgQCZ4bqPK+Mwiy+HLabqJxCMcQ/hY7IPx/oEQZWZq7krJxkLLUI6lkw44XRCutgww1q91yTdsSUNDZ9jFz9LihGTEIu7CGKkzmoGtAWHwq2W38GuA5Fqr0r2vPH1qwkTiuN+VmeKJ+qzOfm9Lh1zjD5e4XndjxiaOrw0wI19zpWlUnEqTTjgs7jz9X7JrHRaimzS3PEF5GGrT6oy6gWoKiWSjrQA2VGWI0yNQpUBFTYWsKSHtR+oJHf2rM3LLyzKcEXnlUUJrjDqNsbbcCN26vIdCGIQTvSjyLj6SY+wYWJEHCgPSbBRUcCEcwp+bATDQNm9L4tI7ZON5ZiJstL/sqIBBXmqruh7nSkWAYQK/H6PUTMQrUU5iK8fSWgS+CB8CiaA8zos9mdMfs1+9UKz0vMDV7PFsb7euunS+DiS5iyz6dAz/uFexDbQXPCbx9Vs7TbBW2iPtYc6SNMqFJD3E7sb1SIHhcpUvdLdctLKfnl6cvTz2o2VfHQLod+mtOq845s= ilmars_public_key"

}

}

Next I attach the block storage volumes I created in the previous post. Here I create the attachments as paravirtualized, meaning the volumes appear on the server as sd* devices; iSCSI is also possible.

resource "oci_core_volume_attachment" "test_volume_attachment" {

attachment_type = "paravirtualized"

instance_id = oci_core_instance.arm_instance.id

volume_id = oci_core_volume.test_volume.id

# Interesting options, could be useful in some cases

is_pv_encryption_in_transit_enabled = false

is_read_only = false

is_shareable = false

}

resource "oci_core_volume_attachment" "silver_test_volume_attachment" {

# This is to enforce device attachment ordering

depends_on = [oci_core_volume_attachment.test_volume_attachment]

attachment_type = "paravirtualized"

instance_id = oci_core_instance.arm_instance.id

volume_id = oci_core_volume.silver_test_volume.id

# Interesting options, could be useful in some cases

is_pv_encryption_in_transit_enabled = false

is_read_only = true

is_shareable = false

}

It looks like OCI supports some interesting options for attaching volumes, like encryption, read-only and shareable. I can see them being useful in the future. If I log into the created server, the attached devices appear as sdb and sdc, where sdc was instructed to be read-only. And indeed it is.

[root@test-arm-1 ~]# lsblk

NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINTS

sda 8:0 0 46.6G 0 disk

├─sda1 8:1 0 100M 0 part /boot/efi

├─sda2 8:2 0 2G 0 part /boot

└─sda3 8:3 0 44.5G 0 part

├─ocivolume-root 252:0 0 29.5G 0 lvm /

└─ocivolume-oled 252:1 0 15G 0 lvm /var/oled

sdb 8:16 0 50G 0 disk

sdc 8:32 0 50G 1 disk

[root@test-arm-1 ~]# dd if=/dev/zero of=/dev/sdb bs=1M count=10

10+0 records in

10+0 records out

10485760 bytes (10 MB, 10 MiB) copied, 0.0453839 s, 231 MB/s

[root@test-arm-1 ~]# dd if=/dev/zero of=/dev/sdc bs=1M count=10

dd: failed to open '/dev/sdc': Read-only file system

- Written by: ilmarkerm

- Category: Blog entry

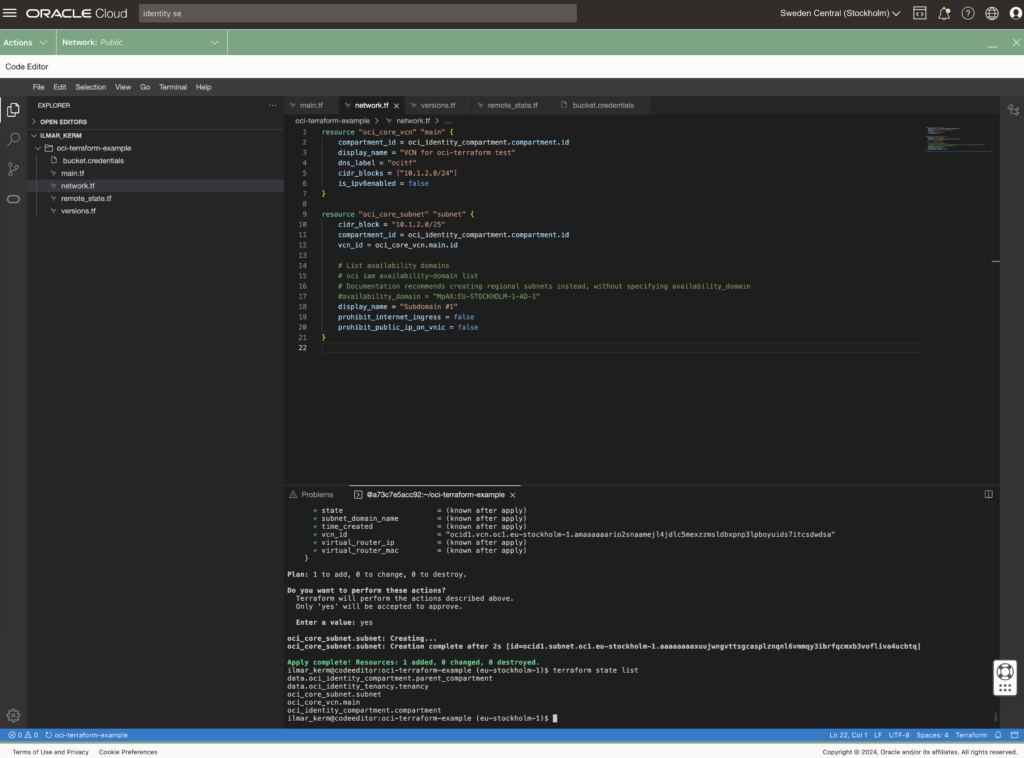

- Published: March 24, 2024

I thought I’d start exploring Oracle Cloud offerings a little and try building something with Terraform.

The execution environment

The OCI Cloud Console offers Cloud Shell and Code Editor right in the browser. Cloud Shell is a small Oracle Linux container with shell access that has the most popular cloud tools and OCI SDKs already deployed. Most importantly, all Oracle Cloud API commands you execute from there run silently as yourself, with no additional setup required. That includes Terraform. Pretty awesome idea I would say: no need to set up any admin computer first.

Since I will mainly write code, I’m going to use only Code Editor (which is actually VS Code in your browser); VS Code also has a built-in terminal for executing commands.

Read about executing and using Cloud Shell here.

Setting up Terraform provider

When executing from Cloud Shell / Code Editor, setting up the Terraform provider is very simple.

# versions.tf

provider "oci" {

region = "eu-stockholm-1"

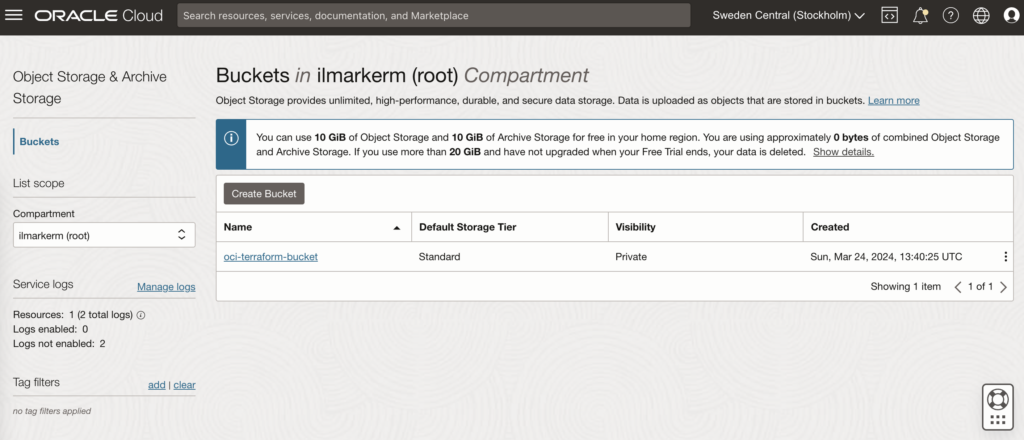

}

It is very good practice to also place the Terraform state file in a shared object store. OCI provides an object store too; to set it up, first create a Bucket in Object Storage.

This also requires setting up Customer Secret Keys for accessing the bucket over the S3 protocol. I’m going to save my access key and secret access key in a file named bucket.credentials.

# bucket.credentials

[default]

aws_access_key_id=here is your access key

aws_secret_access_key=here is your secret access key

# remote_state.tf

terraform {

backend "s3" {

bucket = "oci-terraform-bucket"

key = "oci-terraform.tfstate"

region = "eu-stockholm-1"

# ax9u97qgbo5h is the namespace of the bucket, it is shown in the Bucket Details page

endpoint = "https://ax9u97qgbo5h.compat.objectstorage.eu-stockholm-1.oraclecloud.com"

shared_credentials_file = "bucket.credentials"

skip_region_validation = true

skip_credentials_validation = true

skip_metadata_api_check = true

force_path_style = true

}

}

Creating compartment and basic networking

A compartment is just a handy hierarchical logical container that helps to organise your Oracle Cloud resources better. It can also be used to set common tags for all resources created under it.

# main.tf

locals {

tenancy_id = "ocid1.tenancy.oc1..aaaaaaaawf2fv3ipfdp564ffiqpfqr6u6n3uofydgtihq3wget5357lq5i6a"

environment = "dev"

}

# Information about current tenancy, for example home region

data "oci_identity_tenancy" "tenancy" {

tenancy_id = local.tenancy_id

}

# Get the parent compartment as a terraform object

data "oci_identity_compartment" "parent_compartment" {

# To get a list of existing compartments, execute:

# oci iam compartment list

id = data.oci_identity_tenancy.tenancy.id

}

# Create compartment

resource "oci_identity_compartment" "compartment" {

# Compartment_id must be the parent compartment ID and it is required

compartment_id = data.oci_identity_compartment.parent_compartment.id

description = "oci-terraform experiments"

name = "oci-terraform-experiments"

# Define some default tags that are added to all resources created under this compartment

freeform_tags = {

"deployed_by" = "terraform"

"environment" = local.environment

}

}

To set up networking, you first need a VCN (Virtual Cloud Network) and, under it, subnets.

# network.tf

resource "oci_core_vcn" "main" {

compartment_id = oci_identity_compartment.compartment.id

display_name = "VCN for oci-terraform test"

dns_label = "ocitf"

cidr_blocks = ["10.1.2.0/24"]

is_ipv6enabled = false

}

resource "oci_core_subnet" "subnet" {

cidr_block = "10.1.2.0/25"

compartment_id = oci_identity_compartment.compartment.id

vcn_id = oci_core_vcn.main.id

# List availability domains

# oci iam availability-domain list

# Documentation recommends creating regional subnets instead, without specifying availability_domain

#availability_domain = "MpAX:EU-STOCKHOLM-1-AD-1"

display_name = "Subdomain #1"

prohibit_internet_ingress = false

prohibit_public_ip_on_vnic = false

}
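The subnet CIDR above is written out by hand. As a sketch of an alternative, Terraform's built-in `cidrsubnet` function can derive subnet ranges from the VCN range. The local names below are made up for illustration and are not part of the original configuration:

```hcl
# Hypothetical locals, shown only to illustrate cidrsubnet().
locals {
  vcn_cidr = "10.1.2.0/24"

  # Adding 1 bit to the /24 prefix splits the VCN range into two /25 subnets.
  subnet_cidrs = [
    cidrsubnet(local.vcn_cidr, 1, 0), # 10.1.2.0/25, the range used above
    cidrsubnet(local.vcn_cidr, 1, 1), # 10.1.2.128/25, still unused
  ]
}
```

With this in place the subnet could use `cidr_block = local.subnet_cidrs[0]`, and a second subnet could take the other half of the VCN range without any manual calculation.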

To be continued

I don’t really know where this post series is going. I’ve done quite a bit of Terraforming in AWS, so here I’m just exploring what Oracle Cloud has to offer, and instead of using the dreaded ClickOps, I’ll try to be proper with Terraform.

At the end of the post I have these resources created.