Author: ilmarkerm

- Written by: ilmarkerm

- Category: Blog entry

- Published: April 11, 2026

I currently have a customer who wishes to move their internal APEX application into a cloud based hosting, with quite tight budget constraints – and at the same time still need to treat it as production service.

After comparing many options, none which were perfect, the option that we are going for is Oracle Autonomous database APEX workload type. It is significantly cheaper than the full blown Autonomous Database, but it does come with its own quite unique challenges that we need to overcome. APEX service limitations are documented here

The main limitation – there is no connectivity via SQL*Net, all interactions must be done over HTTPS. Everything, including development of database application and deployments.

Side-effect of this restriction is also that there is no-way to have a custom URL (vanity URL) for your application that would also support APEX social login feature (OAuth/OpenID). To overcome that limitation in a full Autonomous Database installation, people usually deploy their own ORDS in front of the Oracle cloud database service. This solution is not usable here.

I will write a few blog posts, on how to work within the restrictions of the APEX workload type database and still keep most of the developer comforts.

Connecting to the database

To work with the database schema you are mainly expected to use the built in APEX SQL workspace and Database Actions SQL Developer web. Mostly enough, but I think we all prefer using our own preferred database clients. Also it is not possible to automate tasks and deploys via the web page.

A few years ago Oracle introduced REST JDBC driver that talks to the database over ORDS SQL endpoint, via HTTP protocol. This ORDS endpoint is accessible, so lets try using it.

The REST JDBC driver does come with restrictions, the main one being that the driver is stateless and does not support transactions. You can read about the driver and its restrictions here

Create ORDS enabled schema

Open Autonomous AI Database and go to Tool configuration. There you see Web access (ORDS) and open its Public access URL. Open SQL Developer web and log in as ADMIN.

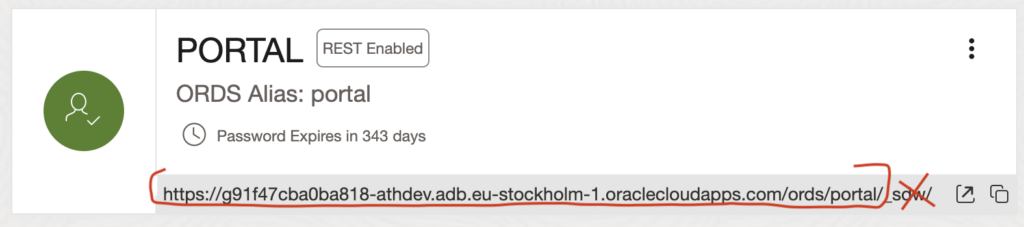

Open Administration > Database users and enable schema you want to log in as REST enabled. And copy the REST URL for this schema, minus the last _sdw/ part.

SQLcl

It is possible to connect to the ORDS SQL endpoint using SQLcl, Jeff Smith blogged about it here. Although not all SQLcl functions are available. For example Liquibase and connection manager are not available.

Here I’m using SQLcl 26.1.

- Start SQLcl with /nolog flag

- CONNECT schemaname@RESTUrl

- Or CONNECT schemaname/password@RESTUrl

ilmarkerm@Ilmars-iMac bin % ./sql /nolog

SQLcl: Release 26.1 Production on Sat Apr 11 09:21:40 2026

Copyright (c) 1982, 2026, Oracle. All rights reserved.

SQL> connect portal@https://g91f47cba0ba818-athdev.adb.eu-stockholm-1.oraclecloudapps.com/ords/portal/

Password? (**********?) **********************

Connected.

SQL> select user, sys_context('userenv','instance_name');

USER SYS_CONTEXT('USERENV','INSTANCE_NAME')

_________ _________________________________________

PORTAL fcehldyf3I can execute usual SQL commands.

SQL> create table t (id number);

Table T created.

SQL> insert into t (id) values (1);

1 row inserted.

SQL> select * from t;

ID

_____

1

SQL> begin

2 for i in 1..100000 loop

3 insert into t (id) values (i);

4 end loop;

5 end;

6* /

PL/SQL procedure successfully completed.

SQL> select count(*) from t;

COUNT(*)

___________

100001

SQL> truncate table t;

Table T truncated.But the REST driver is stateless, so no transactions (between different calls – inside the same call they work).

SQL> insert into t (id) values (2000000002);

1 row inserted.

SQL> rollback;

Rollback complete.

SQL> select * from t;

ID

_____________

2000000002

SQL> begin

2 insert into t (id) values (30000003);

3 rollback;

4 end;

5* /

PL/SQL procedure successfully completed.

SQL> select * from t;

ID

_____________

2000000002You cannot use SQLcl connection manager for saving the connections.

SQL> conn -save portaldev portal@https://g91f47cba0ba818-athdev.adb.eu-stockholm-1.oraclecloudapps.com/ords/portal/

Connections of type OREST are not supported for saving

Warning: You are no longer connected to ORACLE.Also built in Liquibase commands give a NullPointer.

SQL> lb generate-changelog

Null Pointer please log a bug.

liquibase.exception.CommandExecutionException: Cannot invoke "String.toUpperCase()" because the return value of "java.sql.DatabaseMetaData.getSQLKeywords()" is nullGUI

I haven’t been successful at connecting DBeaver nor SQL Developer using the Oracle REST driver. DBeaver just gives a lot of internal JDBC errors even if I strip it down to bare minimum of supported features.

java.lang.NullPointerException: Cannot invoke "java.sql.Statement.execute(String)" because the return value of "org.jkiss.dbeaver.model.impl.jdbc.exec.JDBCStatementImpl.getOriginal()" is nullI’ll leave it like that.

- Written by: ilmarkerm

- Category: Blog entry

- Published: April 7, 2026

Procedure to renew service TLS certificates usually (always?) is that you first renew the certificate+key files the service is using and then you also need to signal the running service to reload the configuration files (or restart). If you forget the last part, the service would still continue identifying itself with the old certificate – even past the certificate has expired. Very easy to forget the service reload/restart part.

Usually with Linux programs to make services reload their configuration, including TLS certificates, there is an option to send the program SIGHUP Unix signal. But sadly this does not work for MySQL. Unix signals only flush tables, flush cache and rotate log files https://dev.mysql.com/doc/refman/8.4/en/unix-signal-response.html

Also none of the mysqladmin commands like reload, refresh make a running mysqld service to reload the TLS certificate files.

The only way I have found for a running MySQL instance to reload the certificate files is this ALTER INSTANCE command

ALTER INSTANCE RELOAD TLSDon’t forget to add it also to your SystemD service file, if your certificate renewal automation relies on SystemD reload command. Need to add something like this to the service file (don’t forget about authentication).

ExecReload=mysql -e "alter instance reload tls"Quite an unusual behaviour from MySQL, so do not be caught out. When certificated expire clients cannot connect using TLS anymore and connections fail.

This does not apply if you choose to restart MySQL service, but this comes with the penalty of short downtime.

- Written by: ilmarkerm

- Category: Blog entry

- Published: June 19, 2025

If you are using Oracle Linux cloud images available from https://yum.oracle.com/oracle-linux-templates.html and at some point it does not boot up again and you see it is stuck in the bootloader (grub> prompt), then here are some steps to help you boot it up again. I have used them to rescue OL8 systems.

Oracle Support has a note “Oracle Linux: How to Recover a Non-Booting GRUB2 After Kernel Update (Doc ID 2898390.1)” but these steps do not perfectly work for the provided cloud image.

First check what disk devices are available, for the cloud image you should see this

grub> ls

(hd0) (hd0,msdos1) (hd0,msdos2)Then check what Linux kernels you have available on this system. You should see vmlinuz ja initramfs file (files) with the matching version number.

grub> ls (hd0,msdos1)/

vmlinux-5.4.17-2136.342.5.el8uek.x86_64 initramfs-5.4.17-2136.342.5.el8uek.x86_64.imgNow boot it up

insmod lvm

insmod normal

insmod gzio

insmod part_gpt

insmod xfs

set root=(hd0,msdos1)

linux /vmlinux-5.4.17-2136.342.5.el8uek.x86_64 root=/dev/mapper/vg_main-lv_root

initrd /initramfs-5.4.17-2136.342.5.el8uek.x86_64.img

bootAfter that the system should boot up as normal.

The Oracle Support note claims this can happen due to broken grub.cfg file, but at least the cases I’ve seen grub.cfg has been fine and regenerating it, as the support note recommends, had no help. But at least can temporarily boot up the system again.

- Written by: ilmarkerm

- Category: Blog entry

- Published: February 23, 2025

To enable edit mode on Interactive Grid immediately when page loads (to save users a double click), you can create a dynamic action to run custom JavaScript on page load:

- Set Static ID on your Interactive Grid region – lets call it MYIG1

- Create a Dynamic Action on event Page Load and on True event let it execute the following JavaScript Code

apex.region("MYIG1").call("getActions").set("edit", true);Up to this part all is well and if you page is not very log, problem solved. But if the page is longer you will notice a side-effect – the page gets automatically scrolled to set focus on the Interactive Grid. To fix it append a scroll up code to the JavaScript snippet above, so a full example would look like this.

apex.region("MYIG1").call("getActions").set("edit", true);

$("html, body").delay(500);

$("html, body").animate({ scrollTop: 0 },0);That little bit of delay is necessary, otherwise scrolling will not work. It will also introduce a little bit of “page flickering”, but at least the page focus is back on top when page is loaded.

Tested with APEX 24.2.

- Written by: ilmarkerm

- Category: Blog entry

- Published: December 20, 2024

WARNING! This post contains ClickOps methods.

I have a customer running Autota PostgreSQL in AWS and they wanted to monitor and alert certain database users on how much they read rows from the database.

For auditing PostgreSQL database there is a extension pgAudit, which is also by default installed in Aurora PostgreSQL. You can read about how to set it up for your AWS database here.

To enable auditing only on a specific user

ALTER USER "ilmar.kerm" SET pgaudit.log TO 'all';

ALTER USER "ilmar.kerm" SET pgaudit.log_catalog TO 'off';

ALTER USER "ilmar.kerm" SET pgaudit.log_parameter TO 'on';

ALTER USER "ilmar.kerm" SET pgaudit.log_rows TO 'on';For my use case, the important bit is also to turn ON pgaudit.log_rows.

After that is done, pgAudit will emit a log record into the main PostgreSQL text log, that looks like this:

2024-12-18 02:25:44 UTC:10.20.30.40(53162):ilmar.kerm@egp:[32766]:LOG: AUDIT: SESSION,94,1,READ,SELECT,,,"SELECT id, value, somethingelse FROM ""APPLSCHEMA1"".""TableName""",<none>,42

This audit record tells me that user “ilmar.kerm” executed SELECT id, value, somethingelse FROM “APPLSCHEMA1″.”TableName”, and that query returned 42 rows.

I now need to extract that value 42 from these audit rows and turn them into CloudWatch metric values.

Cloudwatch Metrics

To extract metric values from CloudWatch Logs, there are Metric filters, but they can only extract values from JSON or SPACE-DELIMITED log records. The pg_audit record above was COMMA-DELIMITED.

Very recently AWS announced an ability to transform logs (into JSON) on ingestion using pre-built or custom parsers. And the transformed logs can also be used to create Metric filters.

Don’t worry, the transformed log is store in addition to the old format, the old format is still accessible as-is. This ofcourse means that the amount of logs CloudWatch stores for PostgreSQL will double. A thing to keep in mind, and not enable auditing for the busy application users.

NB! The examples below use the dreaded ClickOps methodology, since currently AWS Terraform modules do not support this new feature.

First have to turn on the log transformer:

- Open the PostgreSQL database CloudWatch Log Group /aws/rds/cluster/CLUSTER_NAME/postgresql

- Navigate to Transformer and click Create transformer

- Choose a parser: Postgres

- Press Add processor

- Processor: CSV Source: body Delimiter: , Quote character: “

- Under Columns it would be possible to give each column under body a proper name, but I’m not going to, since the transformations apply to all PostgreSQL log records, not just pgAudit.

- Press Save

Here is how the transformer settings look and also how a new transformed audit record will look like.

Every field is beautifully split into JSON attributes that we can extract for metric values. This is what we’ll do next.

Creating a metric to count all rows affected by audited operations.

- Navigate to Metric filters and press Create metric filter

- Create filter pattern: { $.body.column_1 = “AUDIT: SESSION” }

- Enable metric filter on transformed logs: ON

- Next

- Filter name: write something yourself

- Metric namespace and Metric name – write something yourself, this is how the metric will be named under CloudWatch Metrics

- Metric value: $.body.column_10

- Unit: Count

- Dimension name: operation, Dimension value: $.body.column_4

Something to keep in mind – auditing COPY command

pg_audit always reports that COPY command returns 0 rows. I would alert on all executed COPY commands.

To just count the COPY operations executed, create a new metric filter.

- Navigate to Metric filters and press Create metric filter

- Create filter pattern: { ($.body.column_1 = “AUDIT: SESSION”) && ($.body.column_4 = “READ”) && ($.body.column_5 = “COPY”) }

- Enable metric filter on transformed logs: ON

- Next

- Filter name: write something yourself

- Metric namespace and Metric name – write something yourself, this is how the metric will be named under CloudWatch Metrics

- Metric value: 1

- Unit: Count